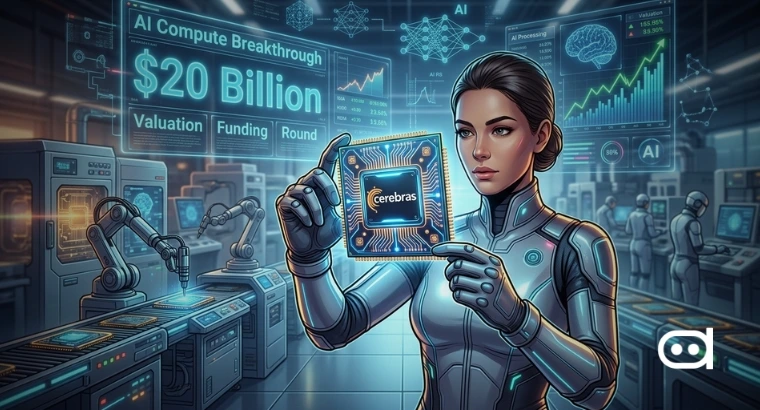

- OpenAI has reportedly agreed to spend more than $20 billion on AI chips from Cerebras Systems in a multi-year deal that ramps up its compute capacity.

- The agreement is expected to roll out through the late 2020s and could give OpenAI an equity stake of up to 10% in Cerebras through warrants.

- This move signals a strategic push to lock in long-term AI infrastructure and cut dependence on dominant chip suppliers.

The reported deal between OpenAI and Cerebras Systems marks a clear shift in how top AI companies are thinking about growth. They’re not just focused on making smarter models anymore; the real competition is now in the infrastructure that powers them. With demand for AI services booming around the world, reliable and scalable compute has become a top priority. This new deal appears to build on an earlier partnership, and by tying sourcing to potential ownership, OpenAI isn’t simply buying capacity; it’s digging deeper into the supply chain.

OpenAI’s Strategic Plan towards Owning Compute

What makes this so important is not just the size of the investment but the business model behind it. Tech companies have traditionally depended on third-party suppliers for hardware, competing for whatever resources are available. But with AI getting more powerful and workloads growing more complex, that old approach is no longer enough.

OpenAI is committing billions to Cerebras, effectively reserving a large amount of future compute capacity. The equity component makes the connection even tighter, aligning both companies’ incentives and possibly giving OpenAI a say in future technology decisions.

The move also shows how the AI sector wants to break away from relying on just one big chipmaker. Betting everything on a single supplier is risky, so alternative architectures are getting more attention. Cerebras, known for its unusual chip design, stands to gain a lot from this deal, especially if it is pursuing further growth and a public offering.

The deal highlights just how capital-heavy the AI race has become. Locking down infrastructure at this level takes serious financial muscle, and it could widen the gap between industry giants and everyone else. It also shows that partnerships in this era are no longer the old customer-supplier arrangements; they are blurring the lines between buyer, investor, and strategic partner.

How Other AI Firms are Tackling the Chip Shortage

OpenAI is not the only one reworking its hardware game plan. Major firms across the industry are trying all kinds of strategies to get around chip shortages and soaring costs. One popular move is to build their own silicon; Meta, for example, is pouring resources into custom AI chips designed for its own needs, teaming up with companies like Broadcom to make it happen. Anthropic, meanwhile, has struck a deal with Google for a large amount of TPU capacity.

Cloud options are also becoming a bigger piece of the puzzle. Many AI companies are diversifying by renting compute across different platforms, tapping into Google TPUs and AWS’s custom chips instead of sticking to just GPUs. All these tactics show where things are headed. Rather than scrapping over a limited pool of chips, companies are reshaping the playing field with custom hardware, smart partnerships, and hybrid infrastructure.

Wrapping Up

This reported deal between OpenAI and Cerebras Systems highlights a much bigger shift happening across AI. As AI systems get bigger and more involved in daily life, the demand for fast, dependable compute is growing. Companies are no longer satisfied with mere access to hardware; they want a real stake in the infrastructure powering their models.