The rapid development of large language models (LLMs) has changed how companies and developers build AI-powered software. These models power intelligent systems ranging from chatbots to enterprise automation. However, their heavy storage and processing requirements create serious operational difficulties.

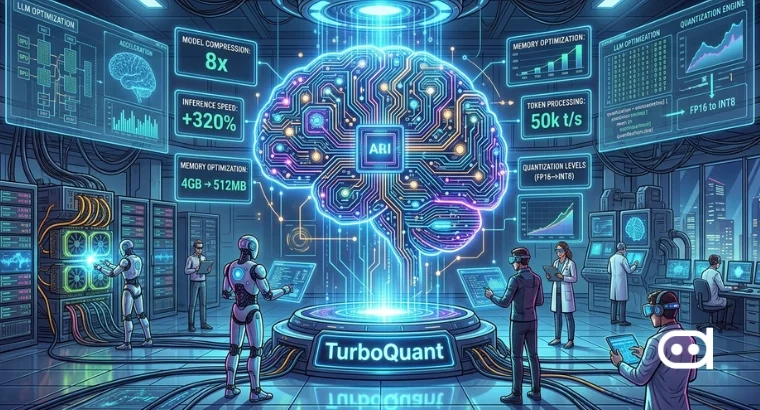

Google TurboQuant addresses this problem. It applies advanced compression methods to improve system performance while preserving model accuracy.

What is TurboQuant?

TurboQuant, developed by Google in March 2026, is a two-stage vector compression system that significantly reduces memory use and speeds up inference for large language models and vector search engines. It addresses the memory wall problem by compressing Key-Value (KV) caches to about 3 bits per value while maintaining accuracy, which makes long-context processing more efficient. Through quantization, it converts high-precision numerical values into lower-bit formats, which shrinks the memory footprint and increases processing speed.
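
A rough size estimate shows why the KV cache becomes a bottleneck at long context lengths. The sketch below is illustrative only: the model shape (32 layers, 8 KV heads, head dimension 128, 128,000 tokens) and the 16-bit baseline are assumptions chosen for the example, not figures published for TurboQuant.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bits_per_value):
    """Approximate KV-cache size in bytes for a single sequence.

    The factor 2 accounts for storing both a key vector and a value
    vector per token, per layer, per KV head.
    """
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len
    return values * bits_per_value / 8


# Hypothetical model shape, assumed purely for illustration.
config = dict(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=128_000)

fp16_bytes = kv_cache_bytes(bits_per_value=16, **config)  # assumed baseline
q3_bytes = kv_cache_bytes(bits_per_value=3, **config)     # ~3-bit cache

print(f"16-bit cache: {fp16_bytes / 2**30:.2f} GiB")
print(f" 3-bit cache: {q3_bytes / 2**30:.2f} GiB")
print(f"reduction:    {fp16_bytes / q3_bytes:.2f}x")
```

The ratio here is simply the ratio of bit widths. Reduction figures quoted for a real system depend on the baseline precision and the exact bit budget used, so they will not necessarily match this idealized number.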

TurboQuant is designed to fit current LLM architectures, so models can run efficiently on limited system resources. This lets developers build advanced AI systems that run on standard computing equipment. It also complements other AI compression techniques such as pruning and distillation. Used together, they form a broader toolkit that improves model efficiency while maintaining output quality.

Why Optimization is Important for Large Language Models

Growing Complexity of LLMs

Modern LLMs contain billions of parameters. This added complexity brings advanced capabilities, but it also makes models harder to run and deploy efficiently. At large scale, even small inefficiencies become critical and can cause major operational delays.

Cost and Infrastructure Challenges

Running large models requires expensive GPUs, large amounts of memory, and considerable power. These infrastructure requirements make AI deployment costly, which particularly affects startups and small businesses.

Need for Efficient Model Deployment

AI solutions need resource optimization and speed improvements to be practical in real-world environments. Efficient deployment lets systems respond quickly and run on a wide range of devices. Real-time interactive systems such as conversational AI and recommendation engines depend on this.

How TurboQuant Works

Quantization Techniques

At its core, TurboQuant relies on quantization, which reduces the numerical precision of model weights. Instead of keeping values as 32-bit floating-point numbers, it represents them in 8-bit or 4-bit formats. This substantially reduces memory use with little loss of accuracy, because the method is designed to preserve the most important information during conversion.
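
To make the idea of lower-bit formats concrete, here is a minimal sketch of plain symmetric uniform quantization. This is the generic textbook technique, not TurboQuant's own algorithm, and the function names and the random test data are assumptions made for the example.

```python
import random


def quantize(values, bits):
    """Symmetric uniform quantization to signed integers of the given width."""
    qmax = 2 ** (bits - 1) - 1                 # 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate floating-point values."""
    return [c * scale for c in codes]


def mean_abs_error(a, b):
    """Average absolute difference between two equal-length sequences."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


rng = random.Random(0)
weights = [rng.gauss(0.0, 1.0) for _ in range(1000)]  # stand-in for real weights

errors = {}
for bits in (8, 4):
    codes, scale = quantize(weights, bits)
    restored = dequantize(codes, scale)
    errors[bits] = mean_abs_error(weights, restored)
    print(f"{bits}-bit: mean abs error = {errors[bits]:.4f}")
```

Fewer bits mean a coarser grid and a larger reconstruction error, which is the trade-off that more advanced schemes try to minimize.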

Model Compression

TurboQuant also makes models more compact. Smaller parameter sizes mean faster loading times and lower storage needs, which makes deployment more efficient. Compression also allows multiple models to run at the same time on shared hardware.
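
Storage savings only materialize when low-bit codes are packed tightly in memory. The sketch below shows one common approach, packing two 4-bit codes into each byte. The helper names are hypothetical and the layout is an assumption for illustration, not the storage format TurboQuant uses.

```python
def pack_4bit(codes):
    """Pack unsigned 4-bit codes (0..15) two per byte, high nibble first."""
    if any(not 0 <= c <= 15 for c in codes):
        raise ValueError("codes must fit in 4 bits")
    padded = list(codes) + [0] * (len(codes) % 2)   # pad to an even count
    return bytes((hi << 4) | lo for hi, lo in zip(padded[0::2], padded[1::2]))


def unpack_4bit(packed, count):
    """Recover the first `count` 4-bit codes from packed bytes."""
    codes = []
    for byte in packed:
        codes.append(byte >> 4)
        codes.append(byte & 0x0F)
    return codes[:count]


codes = [3, 15, 0, 7, 9]
packed = pack_4bit(codes)
assert unpack_4bit(packed, len(codes)) == codes     # lossless round trip

float32_bytes = 4 * len(codes)                      # same values as 32-bit floats
print(len(packed), "bytes packed vs", float32_bytes, "bytes as float32")
```

Five values that would occupy 20 bytes as 32-bit floats fit in 3 bytes once packed, before counting any scale factors a quantizer needs to store alongside them.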

Computational Efficiency

The primary benefit of Google’s new algorithm is computational efficiency. TurboQuant reduces the computation needed for inference, which results in faster responses and lower latency. Real-time AI systems, such as chatbots and recommendation systems, depend on this. It also improves energy efficiency, which makes AI deployment more sustainable.

Key Features of TurboQuant

- Extreme Compression with Zero Accuracy Loss: TurboQuant compresses KV cache data to about 3 bits per value (over 6x reduction) without retraining or fine-tuning, while maintaining near-perfect scores on benchmarks such as “Needle in a Haystack”.

- Two-Stage Compression (PolarQuant + QJL):

–PolarQuant (Stage 1) – Rotates data vectors and maps them into polar coordinates, a simpler geometric representation that allows high-quality compression. This removes the need to store per-block normalization constants.

–QJL (Quantized Johnson-Lindenstrauss) (Stage 2) – Applies a 1-bit, theoretically grounded error-correction step that removes bias and residual error from the first-stage results.

- Data-Oblivious Operation: Unlike other quantization methods that require calibration on specific datasets, TurboQuant is data-oblivious, meaning it works out of the box without needing to analyze model training data or perform time-consuming preprocessing.

- Significant Inference Speedups: TurboQuant accelerates attention logit computation, running up to 8x faster than with 32-bit unquantized keys on NVIDIA H100 GPUs.

- Theoretical Optimality: Mathematical proofs show that the distortion of the algorithm comes close to the theoretical lower bounds for compression.

- Efficient Vector Search (RAG): TurboQuant supports efficient vector search for retrieval-augmented generation (RAG), with virtually zero indexing time in high-dimensional vector databases and higher recall than existing Product Quantization baselines.
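
The 1-bit idea behind the QJL stage can be sketched with a sign-based random projection. The code below is a simplified illustration, not Google's implementation: it keeps only the sign of each Gaussian projection of a key plus the key's norm, then estimates the query-key inner product from those bits. In TurboQuant the step is described as correcting first-stage results, whereas this sketch applies it directly to a raw key for simplicity. The dimensions and function names are assumptions.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def make_projection(m, d, rng):
    """Random m x d matrix with i.i.d. standard normal entries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


def encode_key(key, projection):
    """Keep one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in projection]
    return signs, math.sqrt(dot(key, key))


def estimate_inner_product(query, signs, key_norm, projection):
    """Unbiased estimate of <query, key> from the 1-bit sketch.

    Relies on E[(s.q) * sign(s.k)] = sqrt(2/pi) * <q, k> / ||k||
    for a standard Gaussian vector s.
    """
    m = len(projection)
    total = sum(dot(row, query) * s for row, s in zip(projection, signs))
    return math.sqrt(math.pi / 2) * key_norm * total / m


rng = random.Random(0)
d, m = 16, 4000                      # illustrative sizes, not from the article
key = [rng.gauss(0.0, 1.0) for _ in range(d)]
query = [rng.gauss(0.0, 1.0) for _ in range(d)]
projection = make_projection(m, d, rng)

signs, key_norm = encode_key(key, projection)
estimate = estimate_inner_product(query, signs, key_norm, projection)
exact = dot(query, key)
print(f"exact = {exact:.3f}, estimate = {estimate:.3f}")
```

The number of projections `m` is set far higher than a practical system would use, so that the estimate visibly concentrates around the exact value. The query is left unquantized, which is what keeps the estimator unbiased.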

Benefits of Using TurboQuant for LLMs

Improved Model Performance

The optimization methods in TurboQuant speed up data processing. Systems deliver results quickly while staying accurate, which makes AI applications more reliable. They also achieve the low response times that interactive applications require.

Lower Hardware Requirements

Because TurboQuant needs less memory and processing power, models can run on modest computing systems. This makes AI technology accessible to more people and organizations, including those with limited technical resources.

Cost Efficiency

Optimized models need fewer resources to run, which lowers operational and infrastructure costs. This particularly helps businesses that operate AI at scale, because it increases return on investment and reduces long-term spending.

Scalable AI Applications

TurboQuant lets organizations scale their AI applications across multiple environments, including cloud platforms and edge devices, while remaining efficient. It supports a variety of deployment options and operational requirements.

Use Cases of TurboQuant in AI Applications

AI Chatbots and Virtual Assistants

TurboQuant helps chatbots respond faster by reducing wait times and increasing the load they can handle. This is especially valuable for applications during peak usage times.

AI-powered Search Systems

Search systems can use TurboQuant to process queries more efficiently, returning results faster and matching them more closely to what users need.

Enterprise AI Solutions

Businesses use TurboQuant to improve operational efficiency and cut costs in data analysis, automation, and customer support. It also helps them handle complex processes that involve large data sets.

Edge AI Applications

TurboQuant allows AI models to run on edge devices such as smartphones and Internet of Things (IoT) devices. This reduces dependence on cloud-based systems and provides the real-time processing that smart assistants and self-driving systems need.

Future of TurboQuant in AI Development

As LLMs grow more capable and more common, the need for efficient AI systems keeps rising. TurboQuant will help shape future systems by making advanced models simpler and more efficient to use. Further advances in quantization are expected to reduce memory consumption while improving precision, and integration with new hardware accelerators should bring additional performance gains. As part of the current family of AI compression methods, TurboQuant will help organizations adopt AI faster and more affordably. It also lays the foundation for AI systems that scale sustainably while staying efficient in every setting.